Setting up a Kubernetes Cluster the Hard Way with kubeadm (GitOps Series, Part 1)



In this post, I will walk you through my journey of setting up an over-engineered homelab based on a Kubernetes cluster bootstrapped with kubeadm, while adhering to GitOps principles for deploying and managing cluster tools and applications.

The 3 parts of this series will cover the following topics:

Part 1: Setting up the Kubernetes cluster from scratch using kubeadm

- Setting up the Kubernetes cluster from scratch using kubeadm

- Installing kubeadm and joining worker nodes

- User creation using openssl

- Remote access to the cluster

Part 2: Deploying Core Infrastructure applications and cluster tools

- Setting up core infrastructure components and tools

- Adding taints and labels to the nodes

- Core

- Calico as CNI plugin via tigera-operator

- MetalLB as load balancer

- Metrics Server for resource metrics

- Tools

- Enabling persistent storage via NFS and SMB provisioners

- External Secrets Operator for Kubernetes secret management

- Vault for secure secrets storage

- ArgoCD for GitOps continuous delivery

- Cert-manager for automated TLS certificate management

- Wildcard TLS certificate provisioning

- Nginx Ingress Controller with VirtualServer CRDs

- Kyverno for policy-based secret distribution

- Pi-hole for DNS management and ad-blocking

- External-DNS for automatic DNS record management

Part 3: Managing the cluster using App of Apps Principles

- Changing the cluster management approach to App of Apps

- Deploying Homarr as a dashboard for managing applications

- Deploying tailscale-operator for secure remote access

- Adding a Kyverno policy to generate Tailscale ingress for VirtualServer

- Deploying Immich as a Google Photos replacement

Without further ado, let’s get to it!

Part 1: Setting up the Kubernetes cluster from scratch using kubeadm

The first part of this series will focus on setting up a Kubernetes cluster from scratch using kubeadm. This process involves several steps, including configuring the control plane, joining worker nodes, and setting up essential tools for managing the cluster. The guide below will walk you through the entire process, ensuring that you have a solid foundation for your Kubernetes homelab.

Automation Available: Instead of doing the steps manually, you can check out my k8s-setup repository, which contains an Ansible playbook that automates the entire setup process. The playbook handles common setup, containerd installation, k8s tools, DNS aliases, and kubeadm initialization for both control plane and worker nodes.

Introduction

Kubernetes (k8s) is the de facto standard for orchestrating containerized applications. Even though many managed Kubernetes services are available (AKS, GKE, Hetzner, …), setting up a cluster from scratch is a great learning experience, and it is a skill you need in order to pass the CKA exam.

kubeadm is the tool that will get the job done. Once you install it on your machines, it bootstraps the control plane and worker nodes based on a YAML configuration. The kubelet runs as a systemd service on every node and, in turn, manages the static pods (etcd, kube-apiserver, kube-controller-manager, and kube-scheduler) whose manifests kubeadm writes to /etc/kubernetes/manifests on the control plane node.
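To make the static pod mechanism a bit more concrete, here is a minimal sketch that lists the manifests the kubelet watches. The helper name list_static_pods is hypothetical, and the default directory is the one mentioned above, which only exists on the control plane node after kubeadm init has run.

```shell
#!/bin/sh
# List static pod manifests in a directory (default: /etc/kubernetes/manifests).
# list_static_pods is a hypothetical helper name used for illustration.
list_static_pods() {
  dir="${1:-/etc/kubernetes/manifests}"
  for manifest in "$dir"/*.yaml; do
    # Skip the unexpanded glob when the directory contains no manifests
    [ -e "$manifest" ] || continue
    basename "$manifest" .yaml
  done
}

# Example (on an initialized control plane node):
# list_static_pods
```

Each name printed corresponds to one control plane component that the kubelet starts directly from its manifest file, without going through the API server.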

But we are getting ahead of ourselves. Let’s start with the prerequisites and the setup process.

Prerequisites

Before we begin, ensure you have the following:

- A set of machines (virtual or physical) to serve as the control plane and worker nodes

- Static or DHCP-reserved IP addresses for each node. A node whose IP address changes can force the kubelet to re-register and makes the control plane unstable.

- SSH access to each node

- Control workstation to connect to the nodes

Machine Setup

I used three machines running Ubuntu 24.04 Server, with the following specifications:

| Machine | Hostname | IP | Hardware |
|---|---|---|---|
| Control Plane | cp1 | 192.168.0.151 | 4 vCPUs, 6GB RAM, 50GB Disk |
| Worker Node 1 | wp1 | 192.168.0.152 | 8 vCPUs, 8GB RAM, 50GB Disk |
| Worker Node 2 | wp2 | 192.168.0.153 | 8 vCPUs, 8GB RAM, 50GB Disk |


Setting up the Kubernetes cluster from scratch using kubeadm

Installing kubeadm and joining worker nodes

Installing Dependencies

Important: If not otherwise specified, the commands in this guide should be run on all nodes (control plane and worker nodes).

Before we start, we need to install some dependencies on all nodes. SSH into each node and run the following commands:

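# Install dependencies:
#   essential tools:          lsb-release tmux bash-completion vim git
#   networking tools:         curl wget net-tools iputils-ping
#   package management tools: apt-transport-https software-properties-common ca-certificates
#   JSON/YAML processing and the NFS client (to mount NFS shares in pods): jq yq nfs-common
# Note: comments cannot follow a line-continuation backslash, so they are listed up here.
sudo apt-get update -qq
sudo apt-get install -y --no-install-recommends -qq \
  lsb-release tmux bash-completion vim git \
  curl wget net-tools iputils-ping \
  apt-transport-https software-properties-common ca-certificates \
  jq yq nfs-common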

# Configuring the shell

echo "set -g mouse on" > ~/.tmux.conf # Enable mouse support in tmux

echo "set -g default-terminal screen-256color" >> ~/.tmux.conf # Set default color terminal

echo 'if [ -z "$TMUX" ] && [ "$TERM_PROGRAM" != "vscode" ] && [ -z "$SESSION_MANAGER" ]; then tmux attach -t default || tmux new -s default; fi' >> /home/${USER}/.bashrc # Attach to tmux session on login when not in VSCode
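After the installation, a quick sanity check can confirm that the tools are actually on the PATH. The following is a minimal sketch; missing_tools is a hypothetical helper name, and note that some packages (for example net-tools or nfs-common) do not ship a command with the same name as the package.

```shell
#!/bin/sh
# Print every command from the argument list that is not found on PATH.
# missing_tools is a hypothetical helper name used for illustration.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
}

# Example: check a few of the tools installed above
# missing_tools tmux git curl wget jq yq
```

An empty result means every listed command was found.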

Configuring Kernel Modules and Installing Container Runtime

By default, Kubernetes requires certain kernel modules to be loaded and swap to be disabled. It also needs a container runtime to run containers; for this setup we will use containerd.

# Disable swap now and comment out the swap entry in /etc/fstab so it stays off after a reboot
sudo swapoff -a && sudo sed -i 's/\/swap/#\/swap/' /etc/fstab

# Load required kernel modules
sudo modprobe overlay # Overlay filesystem for container images
sudo modprobe br_netfilter # Bridge networking for containers

# Let iptables see bridged traffic and enable IP forwarding
sudo tee /etc/sysctl.d/kubernetes.conf <<EOF
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF

sudo sysctl --system # Apply sysctl settings
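One thing to keep in mind is that modprobe only loads a module for the current boot. A common way to make this persistent is a file under /etc/modules-load.d, which systemd reads at startup. The sketch below writes such a file and checks a sysctl file for the three required settings. Both helper names and the file name k8s.conf are assumptions for illustration, not part of the original setup.

```shell
#!/bin/sh
# Write the list of modules to load at boot to the given file.
# Intended target on a node (assumption): /etc/modules-load.d/k8s.conf
write_modules_conf() {
  printf '%s\n' overlay br_netfilter > "$1"
}

# Succeed only if every required key is set to 1 in the given sysctl file.
check_sysctl_conf() {
  for key in net.bridge.bridge-nf-call-iptables \
             net.bridge.bridge-nf-call-ip6tables \
             net.ipv4.ip_forward; do
    grep -Eq "^[[:space:]]*${key}[[:space:]]*=[[:space:]]*1[[:space:]]*$" "$1" || {
      echo "missing or disabled: $key" >&2
      return 1
    }
  done
}

# Example:
# write_modules_conf /tmp/k8s.conf
# sudo install -m 644 /tmp/k8s.conf /etc/modules-load.d/k8s.conf
# check_sysctl_conf /etc/sysctl.d/kubernetes.conf && echo "sysctl file looks good"
```

Writing to a temporary file first and installing it with sudo avoids the usual problem of shell redirections not running with elevated privileges.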

Next, we will install containerd, which is the container runtime I am using for Kubernetes.

sudo apt-get -qq install -y containerd || sudo apt-get satisfy "containerd (>= 1.6.15)"

# Generate default configuration for containerd (tee needs sudo to write below /etc)
sudo mkdir -p /etc/containerd && containerd config default | sudo tee /etc/containerd/config.toml > /dev/null

# Configure containerd to use systemd cgroup driver
sudo sed -e 's/SystemdCgroup = false/SystemdCgroup = true/g' -i /etc/containerd/config.toml

# Restart containerd service to apply changes
sudo systemctl restart containerd
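The kubelet set up by kubeadm uses the systemd cgroup driver by default, so containerd has to use the same driver. If the sed command above did not match anything, the mismatch tends to show up later as pods that restart repeatedly. A minimal sketch for checking the setting follows; uses_systemd_cgroup is a hypothetical helper name.

```shell
#!/bin/sh
# Succeed if the given containerd config enables the systemd cgroup driver.
# Defaults to the path used in this guide.
uses_systemd_cgroup() {
  grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' \
    "${1:-/etc/containerd/config.toml}"
}

# Example:
# uses_systemd_cgroup && echo "containerd uses the systemd cgroup driver"
```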

Installing kubeadm, kubelet, and kubectl

Now we will install the Kubernetes components: kubeadm, kubelet, and kubectl. These tools are essential for managing the Kubernetes cluster.

- kubeadm is used to bootstrap the cluster

- kubelet is the agent that runs on each node and manages the containers as a service

- kubectl is the command-line tool to interact with the cluster

KUBE_VERSION="1.33"

# Make sure the keyring directory exists before writing the repository key
sudo mkdir -p -m 755 /etc/apt/keyrings
curl -fsSL https://pkgs.k8s.io/core:/stable:/v${KUBE_VERSION}/deb/Release.key | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg
echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v${KUBE_VERSION}/deb/ /" | sudo tee /etc/apt/sources.list.d/kubernetes.list
sudo apt-get update -qq

# Get the latest patch version for the specified KUBE_VERSION
LATEST_VERSION=$(apt-cache madison kubelet | grep "${KUBE_VERSION}" | head -n1 | awk '{print $3}')
sudo apt-get install -y kubelet=${LATEST_VERSION} kubeadm=${LATEST_VERSION} kubectl=${LATEST_VERSION} -q

# Prevent unattended upgrades of the Kubernetes packages
sudo apt-mark hold kubelet kubeadm kubectl

# Point crictl at the containerd socket
sudo crictl config --set runtime-endpoint=unix:///run/containerd/containerd.sock
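The version lookup above deserves a closer look, because the value it returns is a Debian package version and not a Kubernetes release version. The sketch below separates the two steps. It assumes that apt-cache madison prints pipe-separated columns with the newest version first and that package versions look like 1.33.1-1.1; both helper names are hypothetical.

```shell
#!/bin/sh
# Read `apt-cache madison <package>` output on stdin and print the first
# package version that belongs to the given minor release (e.g. 1.33).
latest_pkg_version() {
  awk -v minor="$1" -F'|' '
    { gsub(/[ \t]+/, "", $2) }
    index($2, minor ".") == 1 { print $2; exit }
  '
}

# Turn a package version such as 1.33.1-1.1 into a Kubernetes version
# string such as v1.33.1 by dropping the package revision.
pkg_to_k8s_version() {
  echo "v${1%%-*}"
}

# Example:
# LATEST_VERSION=$(apt-cache madison kubelet | latest_pkg_version 1.33)
# pkg_to_k8s_version "$LATEST_VERSION"
```

Matching on the version column only avoids accidental hits on the repository URL, which also contains the minor version.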

Configuring the Control Plane Node

Important: The commands of this section should only be run on the control plane node.

Now that we have installed the necessary components, we can configure the control plane node. This involves initializing the cluster and setting up essential tools like Helm and Kustomize.

Installing Helm and Kustomize (optional)

# Install Helm

curl -s https://raw.githubusercontent.com/helm/helm/master/scripts/get-helm-3 | bash

# Install Kustomize

curl -s "https://raw.githubusercontent.com/kubernetes-sigs/kustomize/master/hack/install_kustomize.sh" | bash

Using kubeadm to bootstrap the Control Plane Node

Before we can initialize the cluster, we need to:

- Know the interface name (e.g., eth0, ens33, etc.) that will be used for the Kubernetes API server.

- Know the IP address of the control plane node.

- Define the pod network CIDR (e.g., 10.0.0.0/16) that will be used for the cluster.
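When choosing the pod network CIDR, it is worth checking that it does not overlap with the network your nodes live in or with the service subnet (kubeadm defaults to 10.96.0.0/12). The following is a minimal sketch of such a check in plain shell arithmetic. The helper names are hypothetical, and the node network 192.168.0.0/24 in the example is an assumption derived from the IP addresses in the machine table.

```shell
#!/bin/sh
# Convert a dotted IPv4 address to a 32-bit integer.
# The function bodies run in subshells so their variables stay local.
ip_to_int() (
  IFS=.
  set -- $1
  echo "$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))"
)

# Print the first and last address of a CIDR block as integers.
cidr_range() (
  base=$(ip_to_int "${1%/*}")
  prefix=${1#*/}
  size=$(( 1 << (32 - prefix) ))
  start=$(( base / size * size ))
  echo "$start $(( start + size - 1 ))"
)

# Succeed if the two CIDR blocks share at least one address.
cidrs_overlap() (
  set -- $(cidr_range "$1") $(cidr_range "$2")
  # $1/$2 = start/end of the first block, $3/$4 = start/end of the second
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
)

# Example:
# cidrs_overlap 10.0.0.0/16 192.168.0.0/24 && echo "pod CIDR overlaps the node network"
# cidrs_overlap 10.0.0.0/16 10.96.0.0/12   && echo "pod CIDR overlaps the service subnet"
```

With the values used in this guide, neither check should report an overlap.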

Below we assume that the information is stored in the corresponding variables:

INTERFACE="eth0"
CONTROL_PLANE_IP="192.168.0.151"
POD_NETWORK_CIDR="10.0.0.0/16"
KUBEADM_CONFIG="/tmp/kubeadm-config.yaml"

# Create a host entry for the control plane node
echo "${CONTROL_PLANE_IP} cp1" | sudo tee -a /etc/hosts

# LATEST_VERSION comes from the installation step and is a package version (e.g. 1.33.1-1.1).
# Strip the package revision so that kubernetesVersion is a plain release version.
K8S_VERSION="${LATEST_VERSION%%-*}"

# Create the kubeadm configuration file.
# Both documents use the v1beta4 API, which introduced the name/value list form of kubeletExtraArgs.
cat << EOF > ${KUBEADM_CONFIG}
---
apiVersion: kubeadm.k8s.io/v1beta4
kind: ClusterConfiguration
kubernetesVersion: v${K8S_VERSION}
controlPlaneEndpoint: cp1:6443
networking:
  podSubnet: ${POD_NETWORK_CIDR}
---
apiVersion: kubeadm.k8s.io/v1beta4
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: ${CONTROL_PLANE_IP}
  bindPort: 6443
nodeRegistration:
  kubeletExtraArgs:
    - name: node-ip
      value: ${CONTROL_PLANE_IP}
EOF

sudo kubeadm init --config=${KUBEADM_CONFIG} --upload-certs | tee kubeadm-init.out

kubeadm init output:

[init] Using Kubernetes version: v1.33.0

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [cp1 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.0.151]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [cp1 localhost] and IPs [192.168.0.151 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [cp1 localhost] and IPs [192.168.0.151 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "super-admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests"

[kubelet-check] Waiting for a healthy kubelet at http://127.0.0.1:10248/healthz. This can take up to 4m0s

[kubelet-check] The kubelet is healthy after 500.466649ms

[control-plane-check] Waiting for healthy control plane components. This can take up to 4m0s

[control-plane-check] Checking kube-apiserver at https://192.168.0.151:6443/livez

[control-plane-check] Checking kube-controller-manager at https://127.0.0.1:10257/healthz

[control-plane-check] Checking kube-scheduler at https://127.0.0.1:10259/livez

[control-plane-check] kube-controller-manager is healthy after 2.683405608s

[control-plane-check] kube-scheduler is healthy after 2.876555403s

[control-plane-check] kube-apiserver is healthy after 4.501583542s

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Storing the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[upload-certs] Using certificate key:

8b519600195d3268e7c5588975812b355e68531102c8bff3b66ad60f0d8f06c9

[mark-control-plane] Marking the node cp1 as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node cp1 as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: qojgig.d45q3p6mq43a9pgi

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy


This will take a while and once it is done you will see a message similar to the one below:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes running the following command on each as root:

kubeadm join cp1:6443 --token qojgig.d45q3p6mq43a9pgi \

--discovery-token-ca-cert-hash sha256:78d97189f734d2f8e462d6af99d56973130688b1beafd81235bd48237e290572 \

--control-plane --certificate-key 8b519600195d3268e7c5588975812b355e68531102c8bff3b66ad60f0d8f06c9

Please note that the certificate-key gives access to cluster sensitive data, keep it secret!

As a safeguard, uploaded-certs will be deleted in two hours; If necessary, you can use

"kubeadm init phase upload-certs --upload-certs" to reload certs afterward.

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join cp1:6443 --token qojgig.d45q3p6mq43a9pgi \

--discovery-token-ca-cert-hash sha256:78d97189f734d2f8e462d6af99d56973130688b1beafd81235bd48237e290572

Note: Make sure to copy the kubeadm join command for worker nodes, as you will need it later to join the worker nodes to the cluster.
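Since the init command above also writes its output to kubeadm-init.out, the join parameters can be recovered from that file if you lose the terminal output. The following is a minimal sketch; the helper names are hypothetical and the patterns assume the token and hash formats shown in the output above.

```shell
#!/bin/sh
# Extract the bootstrap token from saved `kubeadm init` output.
join_token() {
  grep -o -- '--token [a-z0-9]\{6\}\.[a-z0-9]\{16\}' "$1" | head -n 1 | cut -d' ' -f2
}

# Extract the CA certificate hash from saved `kubeadm init` output.
join_ca_hash() {
  grep -o 'sha256:[0-9a-f]\{64\}' "$1" | head -n 1
}

# Print a worker join command for the given output file and API endpoint.
worker_join_command() {
  echo "sudo kubeadm join $2 --token $(join_token "$1") --discovery-token-ca-cert-hash $(join_ca_hash "$1")"
}

# Example:
# worker_join_command kubeadm-init.out cp1:6443
```

Bootstrap tokens expire after 24 hours by default. If yours has expired, running kubeadm token create --print-join-command on the control plane node prints a fresh join command.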

Great stuff!

Now we will set up the kubeconfig file for the admin user to access the cluster on the control plane node.

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Note: To gain access to the cluster from the control workstation, you can copy the kubeconfig file from the control plane node to the workstation. We will set this up later in the Remote access to the cluster section, using a dedicated admin user.

Using kubeadm to bootstrap the Worker Nodes

Important: The commands of this section should only be run on the worker nodes.

Now that the control plane is set up, we can join the worker nodes to the cluster. SSH into each worker node and configure the hosts file, then run the kubeadm join command that was provided at the end of the kubeadm init output on the control plane node.

echo "192.168.0.151 cp1" | sudo tee -a /etc/hosts

# kubeadm join must run as root
sudo kubeadm join cp1:6443 --token qojgig.d45q3p6mq43a9pgi \
  --discovery-token-ca-cert-hash sha256:78d97189f734d2f8e462d6af99d56973130688b1beafd81235bd48237e290572

Testing the Cluster Setup

After joining the worker nodes, you can verify the cluster setup by running the following command on the control plane node:

kubectl get nodes

You will see output similar to the following (the STATUS column shows NotReady until a CNI plugin is installed in Part 2):

NAME   STATUS   ROLES           AGE   VERSION
cp1    Ready    control-plane   10m   v1.33.1
wp1    Ready    <none>          5m    v1.33.1
wp2    Ready    <none>          5m    v1.33.1
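If you want to check the node status from a script rather than by eye, the node list can be filtered. The following is a minimal sketch; not_ready_nodes is a hypothetical helper name, and it treats any status other than exactly Ready (including Ready,SchedulingDisabled on cordoned nodes) as not ready.

```shell
#!/bin/sh
# Read `kubectl get nodes --no-headers` output on stdin and print the
# names of all nodes whose STATUS column is not exactly "Ready".
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Example:
# kubectl get nodes --no-headers | not_ready_nodes
```

Alternatively, kubectl wait --for=condition=Ready nodes --all --timeout=120s blocks until every node reports Ready or the timeout is reached.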

Remote access to the cluster

We can now use kubectl inside our control plane! As it is not always convenient to SSH into the control plane node and manage our git repositories there, we will set up remote access to the cluster from our control workstation.

User creation using openssl

Now we will create an additional admin user. For this, we will use OpenSSL to generate a new certificate and key pair.

Important: Execute this on the control plane node.

# Create a user certificate for the admin user
sudo mkdir -p /etc/kubernetes/pki/users
sudo openssl genrsa -out /etc/kubernetes/pki/users/admin.key 2048
sudo openssl req -new -key /etc/kubernetes/pki/users/admin.key \
  -out /etc/kubernetes/pki/users/admin.csr -subj "/CN=admin/O=system:masters"

# Sign with cluster CA and include clientAuth EKU (set through an extension file; admin.ext is an arbitrary name)
echo "extendedKeyUsage = clientAuth" | sudo tee /etc/kubernetes/pki/users/admin.ext > /dev/null
sudo openssl x509 -req -in /etc/kubernetes/pki/users/admin.csr \
  -CA /etc/kubernetes/pki/ca.crt -CAkey /etc/kubernetes/pki/ca.key -CAcreateserial \
  -extfile /etc/kubernetes/pki/users/admin.ext \
  -out /etc/kubernetes/pki/users/admin.crt -days 999999

# Temporarily set 644 permissions for admin.key so we can scp it later as a regular user
sudo chmod 644 /etc/kubernetes/pki/users/admin.key

# Run this only after you have copied the certificates to your workstation (next step)
sudo chmod 600 /etc/kubernetes/pki/users/admin.key
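The -subj string is what determines the identity of the new user: with client certificate authentication, Kubernetes takes the user name from the CN field and the groups from the O fields. The system:masters group grants unrestricted access, so for less privileged users you would pick other groups and bind them with RBAC. The sketch below builds such a subject string; build_subject is a hypothetical helper, and the user and group names in the second example are made up.

```shell
#!/bin/sh
# Build an OpenSSL -subj string: the first argument is the user name (CN),
# every further argument becomes one group entry (O).
build_subject() {
  subject="/CN=$1"
  shift
  for group in "$@"; do
    subject="$subject/O=$group"
  done
  echo "$subject"
}

# Examples:
# build_subject admin system:masters   # the subject used in this guide
# build_subject jane dev ops           # hypothetical user in two groups
```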

To access the cluster remotely, you need to create a kubeconfig file that contains the necessary credentials and cluster information. This file will allow you to interact with the cluster from your control workstation.

Important: Execute this on your control workstation.

CONTROL_PLANE_IP="192.168.0.151"

# Create a host entry for the control plane node

echo "${CONTROL_PLANE_IP} cp1" | sudo tee -a /etc/hosts

mkdir -p $HOME/.kube/users/admin

scp user@cp1:/etc/kubernetes/pki/ca.crt $HOME/.kube/users/admin/

scp user@cp1:/etc/kubernetes/pki/users/admin.crt $HOME/.kube/users/admin/

scp user@cp1:/etc/kubernetes/pki/users/admin.key $HOME/.kube/users/admin/

Caution: This will overwrite an existing kubeconfig file.

cat <<EOF | tee $HOME/.kube/config
apiVersion: v1
clusters:
- cluster:
    server: https://cp1:6443
    certificate-authority: ./users/admin/ca.crt
  name: homelab
contexts:
- context:
    cluster: homelab
    user: admin
  name: homelab-admin@homelab
current-context: homelab-admin@homelab
kind: Config
preferences: {}
users:
- name: admin
  user:
    client-certificate: ./users/admin/admin.crt
    client-key: ./users/admin/admin.key
EOF
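If you already have a kubeconfig on your workstation, a slightly more careful variant keeps a backup before writing the new file. The following is a minimal sketch under that assumption; write_kubeconfig is a hypothetical helper name, and the relative certificate paths are resolved by kubectl relative to the location of the kubeconfig file.

```shell
#!/bin/sh
# Write the homelab kubeconfig to the given path and keep a single
# backup (<path>.bak) of any file that was there before.
write_kubeconfig() {
  dest="$1"
  if [ -e "$dest" ]; then
    cp "$dest" "$dest.bak"
  fi
  cat > "$dest" <<'EOF'
apiVersion: v1
kind: Config
clusters:
- name: homelab
  cluster:
    server: https://cp1:6443
    certificate-authority: ./users/admin/ca.crt
users:
- name: admin
  user:
    client-certificate: ./users/admin/admin.crt
    client-key: ./users/admin/admin.key
contexts:
- name: homelab-admin@homelab
  context:
    cluster: homelab
    user: admin
current-context: homelab-admin@homelab
EOF
  # The file references a private key, so restrict it to the current user
  chmod 600 "$dest"
}

# Example:
# write_kubeconfig "$HOME/.kube/config"
```

Another option is to write the file to a separate path and list several files in the KUBECONFIG environment variable, separated by colons, so that kubectl merges them.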

You should now be able to perform kubectl commands from your control workstation. For example, you can check the nodes in the cluster:

kubectl get nodes

You will see output similar to the following (the STATUS column shows NotReady until a CNI plugin is installed in Part 2):

NAME   STATUS   ROLES           AGE   VERSION
cp1    Ready    control-plane   10m   v1.33.1
wp1    Ready    <none>          5m    v1.33.1
wp2    Ready    <none>          5m    v1.33.1

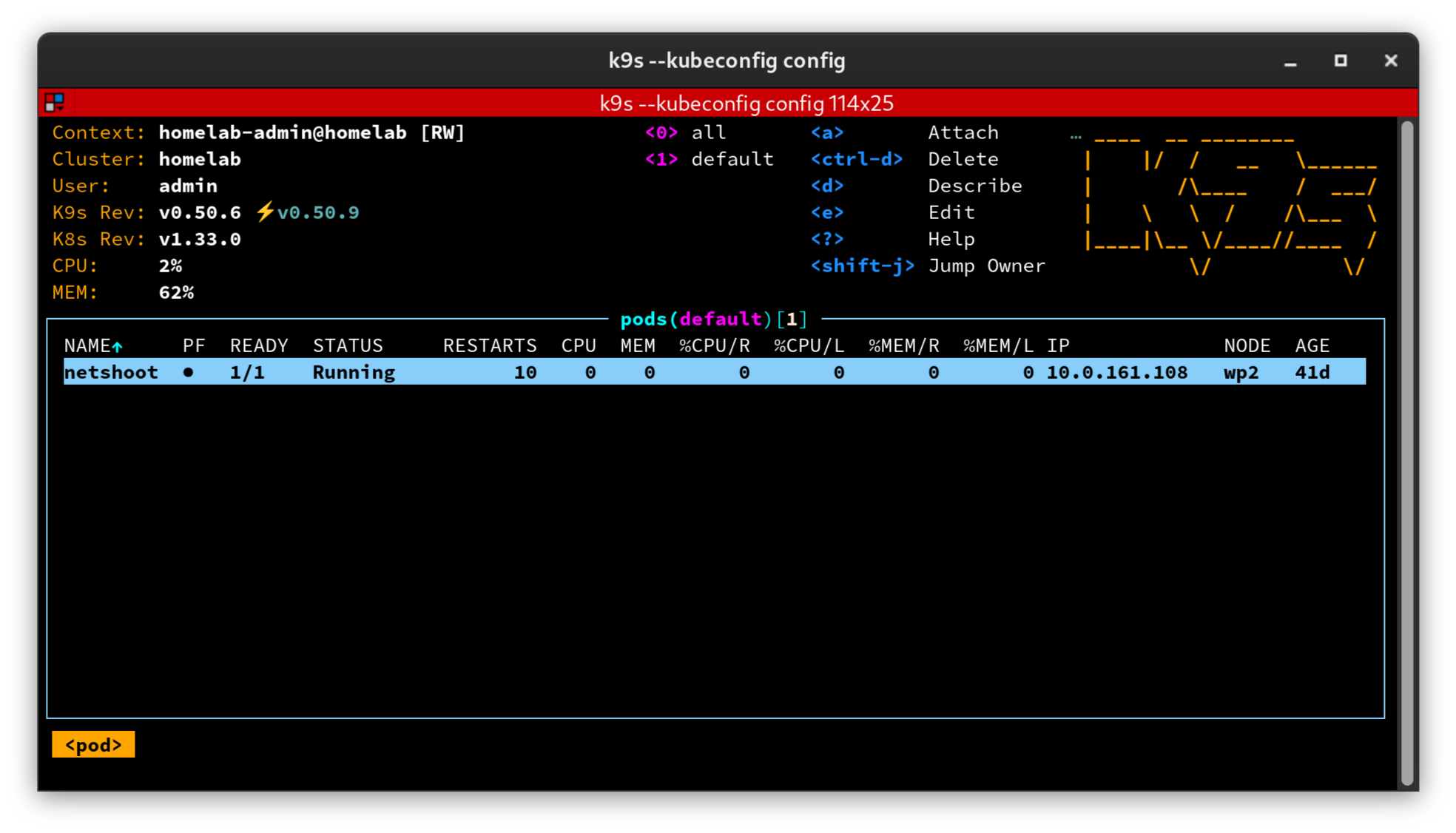

Setting up a k8s IDE

Now that we have set up the kubeconfig file, we can use a Kubernetes IDE to manage our cluster more easily. My favorite is k9s. We will download the latest release from GitHub and install it.

pushd /tmp

wget https://github.com/derailed/k9s/releases/latest/download/k9s_linux_amd64.deb

sudo apt install ./k9s_linux_amd64.deb

rm k9s_linux_amd64.deb

popd

Now you will be able to dive into the magical world of Kubernetes TUIs with k9s.

When you run k9s --kubeconfig $HOME/.kube/config you will see the following:

Installing Helm and Kustomize

Helm and Kustomize are essential tools for managing Kubernetes applications.

Their main features are:

- Helm: a package manager for Kubernetes that lets you parameterise, install, and upgrade even the most complex Kubernetes applications.

- Kustomize: a tool for customizing Kubernetes YAML configurations that lets you manage different environments (e.g., development, staging, production) with ease.

In the later parts of this series, we will use them extensively to deploy and manage applications in our cluster via ArgoCD. However, it’s important to be able to render the charts locally to verify their correctness before deploying.

# Install Helm

curl -s https://raw.githubusercontent.com/helm/helm/master/scripts/get-helm-3 | bash

# Install Kustomize

curl -s "https://raw.githubusercontent.com/kubernetes-sigs/kustomize/master/hack/install_kustomize.sh" | bash
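To illustrate what rendering locally can look like, here is a minimal sketch that picks the matching render command for a directory, depending on whether it contains a Kustomize overlay or a Helm chart. The helper name render_command and the release name my-release are hypothetical.

```shell
#!/bin/sh
# Print the command that renders the manifests in a directory:
# kustomize for directories with a kustomization file, helm for charts.
render_command() {
  dir="${1:-.}"
  if [ -f "$dir/kustomization.yaml" ] || [ -f "$dir/kustomization.yml" ]; then
    echo "kustomize build $dir"
  elif [ -f "$dir/Chart.yaml" ]; then
    # my-release is a placeholder release name
    echo "helm template my-release $dir"
  else
    echo "no kustomization.yaml or Chart.yaml found in $dir" >&2
    return 1
  fi
}

# Example:
# render_command ./my-app
```

The printed command can then be run and its output inspected, or piped to kubectl apply --dry-run=client -f - for a basic client-side check before anything is deployed.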

Summary

In this first part of the GitOps homelab series, we successfully:

- Prepared the host systems - Installed dependencies, configured kernel modules, and set up containerd as the container runtime

- Bootstrapped the Kubernetes cluster - Used kubeadm to initialize the control plane and join worker nodes

- Configured remote access - Created admin user credentials and kubeconfig for managing the cluster from your workstation

- Installed management tools - Set up k9s for cluster visualization, plus Helm and Kustomize for application deployment

At this point, you have a bootstrapped Kubernetes cluster ready for the next steps. However, the cluster still lacks essential components such as:

- A Container Network Interface (CNI) plugin for pod networking

- A load balancer for exposing services

- Storage provisioners for persistent data

- Secret management for secure application configuration

These will be covered in Part 2: Deploying Core Infrastructure and Tools, where we’ll set up the complete infrastructure stack including Calico, MetalLB, ArgoCD, Vault, and more.

Next: Part 2 - Deploying Core Infrastructure applications and cluster tools →